Overview

I've been pretty enthusiastic about the potential of AI, keeping up with the latest releases and gradually folding GitHub Copilot into my daily workflow since early last year. Over that year, using independent agents to aid my development process, I derived significant time savings from instructing individual instances of Claude Sonnet to do things like refactor files, write tests, or explain small-scope changes. I'd put this at roughly a 20% time saving, along with generally improved visibility over my workplace's production infrastructure.

Up till this point, AI usage was extremely focused; given a problem, all the tasks of development were still user-side, with end-to-end project management done by me and assistive agents handling individual subtasks. Sonnet was good at writing functions and perhaps fanning out to retrieve fewer than 10 files of context; anything more and context degradation would significantly erode its output quality.

This degradation essentially prevented anything but the simplest 'loops' from achieving liftoff velocity, as models got worse as their contexts bloated. It was still pretty great, mind; it just limited workflows to a very user-in-the-loop, back-and-forth style of development.

That changed with a step-function leap in agentic capabilities in November/December 2025.

My experience has been pretty much in line with these claims. I credit two primary reasons:

- Model evolution: The generation of Claude Opus 4.5, Claude Sonnet 4.5, and ChatGPT's 5.1 tipped over a threshold of usability.

- Harnesses: Codex finally became usable, and Claude Code matured enough, that using these CLIs with the latest-gen models above started becoming pleasant, often even better than IDE development.

Up till this point I hadn't thought it possible that my workflow would shift to the terminal so drastically; I'd been a staunch IDE user, using vim only for investigative workflows and VSCode/IntelliJ for development otherwise. However, in the past two months, my IDE has been relegated to little more than a text viewer, with development primarily driven through agentic sessions in my terminal.

Since I started going all-in on AI usage about 2 months ago, my workflow has rapidly evolved; this post is a set of check-in notes on my thoughts so far.

General Principle: Scale, scale, scale

It's useful to think in terms of derivatives when looking at the landscape of AI-driven development. Let's use movement of objects as an analogy:

- Position, $x$: The code that is generated, underlying the software running in our systems day-to-day.

- Velocity, $\frac{dx}{dt}$: The speed at which we write code, with all its ensuing requirements (testability, reliability, visibility, etc. etc.).

  - Prior to AIs, the speed of writing reliable code was a strong marker for the productivity of an engineer. This directly impacts their ability to deliver on projects.

  - How did we improve this before? Tooling (IDEs, terminal optimization, bash script familiarity, documentation clarity) and library familiarity to abstract away large modular subsets of work into repeatably reusable components.

- Acceleration, $\frac{d^2x}{dt^2}$: The speed at which we grow faster at writing code.

  - Prior to AIs, this was an implicit factor in the ability of communities to grow; network effects of things like language popularity (Python's explosive growth).

  - How did we improve this before? Mentality (keeping an open mind, always being eager to learn more tooling), working in ecosystems with large open source communities

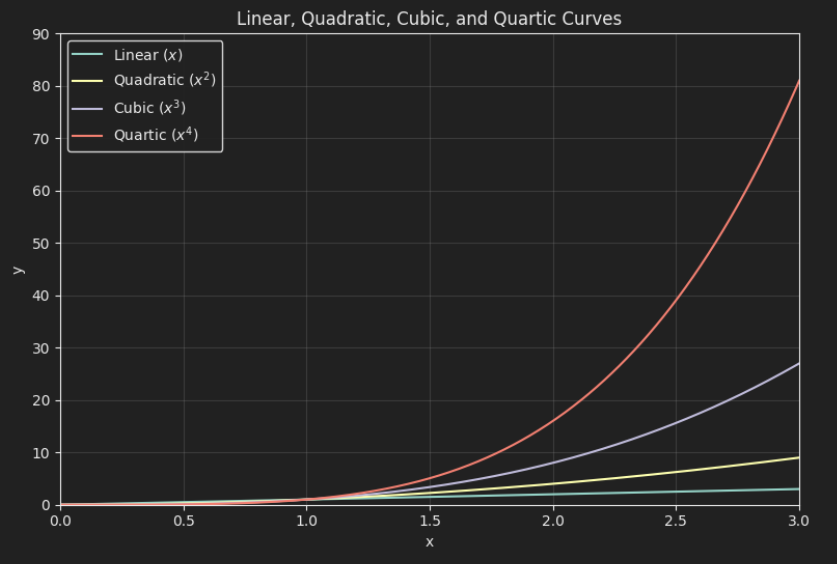

So on, and so forth. These are quadratic/cubic/quartic curves, and every step on each ensuing curve has a massive impact on the output. Each independent layer has a multiplicative effect, so you can multiplicatively stack two quadratic curves to get a quartic effect.
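As a small worked example of the stacking claim, assume two hypothetical levers that each improve quadratically over time, $f(t) = a t^2$ and $g(t) = b t^2$ (the constants $a$ and $b$ are purely illustrative). Multiplying them gives a quartic, while merely adding them stays quadratic:

```latex
\begin{aligned}
f(t)\,g(t) &= (a t^2)(b t^2) = ab\,t^4 \\
f(t) + g(t) &= (a + b)\,t^2
\end{aligned}
```

That gap between the additive and the multiplicative case is why independent layers matter so much.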

Derivatives, for thee: How is Anthropic 10x-ing their revenue every year?

Now let's frame this in the LLM landscape:

Code generated per year = Total LLM data center inference capacity (assuming they are running at full capacity)

Where data center capacity is a function of:

- Rate of growth of data center buildout, which has positive velocity and positive acceleration, constrained by energy availability and customer demand

- Tokens-per-watt efficiency of models

- Retained code-per-token, which is a function of the quality of the model's output. Also note that model capabilities in general enable new use cases, which in turn drive further demand.

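In essence, the equation is roughly:

$$\text{code generated per year} = \text{number-of-hardware} \times \text{watt-per-hardware} \times \text{token-per-watt} \times \text{code-per-token}$$

with the total power drawn (number-of-hardware × watt-per-hardware) bounded by energy-available.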

Given the equations, it is easy to see why we are seeing a runaway explosion in code generation. Multiple factors are compounding:

- `energy-available` is compounding: Solar energy is getting exponentially cheaper

- `number-of-hardware` is compounding (exponentially for now): Data center growth per year

  - Not independent, so I'll file this as a subset of energy.

- `code-per-token` is compounding: Models are getting exponentially more capable at translating token output into usable code

- `watt-per-hardware` is (at least linearly) improving: Research like TurboQuant is allowing larger models to fit into the same size of hardware

- `token-per-watt` is (at least linearly) improving: LLaMA analyses

  - Caveat: Based on private provider pricing this doesn't seem to be the case, given how later models of GPTs and Claudes are more expensive than the last; but there are confounding factors here like volume of hardware needed, which make it hard to estimate tokens-per-watt from provider pricing

We have numerous compounding effects combined with commercial usefulness (supplying capital availability and market demand), which together unlock the steep, near-vertical part of the sigmoid innovation adoption curve.
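To make the compounding concrete, here is a minimal Python sketch. It assumes the factors simply multiply together, and the annual growth multipliers are made up for illustration; none of them are measurements. The names `ANNUAL_GROWTH`, `combined_annual_growth`, and `relative_output` are hypothetical.

```python
from math import prod

# Hypothetical year-on-year growth multipliers (illustrative only, not measured).
ANNUAL_GROWTH = {
    "number_of_hardware": 1.5,
    "watt_per_hardware": 1.1,
    "token_per_watt": 1.2,
    "code_per_token": 1.3,
}


def combined_annual_growth(growth):
    """If output is a product of factors, its yearly growth is the product of their growth rates."""
    return prod(growth.values())


def relative_output(growth, years):
    """Output relative to year 0 after `years` of compounding at the combined rate."""
    return combined_annual_growth(growth) ** years


if __name__ == "__main__":
    for year in range(6):
        print(year, round(relative_output(ANNUAL_GROWTH, year), 2))
```

Even with modest individual rates, the combined rate is well above any single factor, which is the runaway effect described above.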

Derivatives, for me

Given the arsenal of developments improving the landscape happening right now, we ourselves have our own levers that we can pull to add further multiplicative effects to the efficacy of our code generation. Assuming an LLM can generate code 100x faster than a human:

- Using LLMs: We go from 1x to 100x amount of code, multiplied by (code efficiency factor) (say it's 95% inefficient; that's 5x code left)

- Using tools improves the capabilities of LLMs, improving efficiency:

  - Open source/commercial tooling improves year-on-year (positive velocity, positive acceleration)

    - Claude Code, Opencode, Codex

    - Protocol upgrades (introduction of agent skills, MCPs)

    - Leverage inherent within protocols (skills maturing over time, skills that write skills, etc.)

- Frameworks that better orchestrate and instruct LLMs further improve efficiency of LLMs

  - More directed work in line with user intent

  - Rigorous testing for fewer iterations

- Horizontal scaling (subagents) adds a linear multiplier to individual user productivity

It hence becomes paramount that any developer in this AI adoption curve leverages the factors within our control. It's also important to work on things that are orthogonal to external factors, so our work today is similarly extendable to the models and infrastructure of tomorrow.
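As a back-of-the-envelope sketch of how these levers stack, assuming they multiply: the 100x raw speedup and the 5% retained fraction are the figures from the list above, while the tooling gain and subagent count are placeholder values I picked for illustration. `effective_multiplier` is a hypothetical helper, not part of any tool.

```python
def effective_multiplier(raw_speedup, retained_fraction, tooling_gain=1.0, parallel_agents=1):
    """Net output relative to writing code by hand.

    raw_speedup:       how much faster the LLM emits code than a human (100x above)
    retained_fraction: share of generated code that survives (0.05 if 95% is wasted)
    tooling_gain:      assumed factor by which tools/frameworks raise the retained share
    parallel_agents:   assumed number of subagents working at once (linear multiplier)
    """
    # Tooling can raise the retained share, but never above 100%.
    retained = min(1.0, retained_fraction * tooling_gain)
    return raw_speedup * retained * parallel_agents


if __name__ == "__main__":
    print(effective_multiplier(100, 0.05))                                     # LLM alone
    print(effective_multiplier(100, 0.05, tooling_gain=2.0))                   # plus better tooling
    print(effective_multiplier(100, 0.05, tooling_gain=2.0, parallel_agents=4))  # plus subagents
```

The point of the sketch is only that each lever scales the others, so neglecting one caps the rest.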

The Phenomena

Other industries aside, what are some downstream effects of this massive commoditization of software?

Jevons Paradox is almost a certainty

Software is going to be made so much cheaper. While on paper this might sound bad for software engineers, I think of it as analogous to textiles: we went from hand-sewn textiles to factory-made, mass-produced textiles with machinery. Thousands of use cases out there are constrained today because the cost of building and maintaining software is out of a company's reach; in future, software will become far more accessible, and all the automations that ensue make for a very promising future.

Each software engineer now has the veritable capability of becoming a software factory by himself, with the right skillset; we can earn 10x less per line of code, but build 100x more things. I'm anticipating a future where $10K/year in AI tooling can deliver productivity gains that once required a full-time hire.

AI Fatigue

With the massively increased ability to generate code, people have observed significantly increased fatigue from daily-driving AI workflows.

I have seriously observed this myself; coding for the entire day has been the norm for me, being my day job and all; but driving my LLM workflow throughout the day to achieve a given mega-set of features leaves me feeling drained and needing a break within hours. It's not necessarily a bad thing; just a phenomenon I'm personally surprised by, and one that I'm learning to manage.

The dynamic of code generation vs usage has been flipped

Prior to Jan 2026, the implementation complexity of many of the things I'd wanted to build had been the major blocker for my personal projects. Today, that has flipped to validating the things I do build. Of my personal-time budget, requirements have gone from (90% development, 10% UX) to (20% development, 80% UX); I spend more time now validating and interacting with my personal projects than building them out. Many bugs aren't bugs in a trivially discoverable sense; some bugs can simply be intended API workflows wired up in a different order, or the wrong color being used. Validating user preference is now the core constraint, meaning user-testing of LLM-generated code is now one of the key quality gates towards effective products.

Conclusion

We are at the very beginning of a very, very wild ride: one that has been exhilarating to me so far as a developer primarily concerned with building for the sake of product and not for the experience of coding. Scale, scale, scale is the mandate that drives the industry and developments today; these notes simply sum up some of my personal efforts to scale alongside the scaling ecosystem we are in today.

Appendix - Projects in the past 2.5 months

- Site Rewrite: One of the first things I did was to rewrite my site, which had been a messy Vue.js setup that hadn't been worked on in ~5 years.

- My Property Hunt

- Butlers - My Personal Jarvis

- Recursive self-improvement of My AI Setup

- Tze HUD - Still a big WIP!